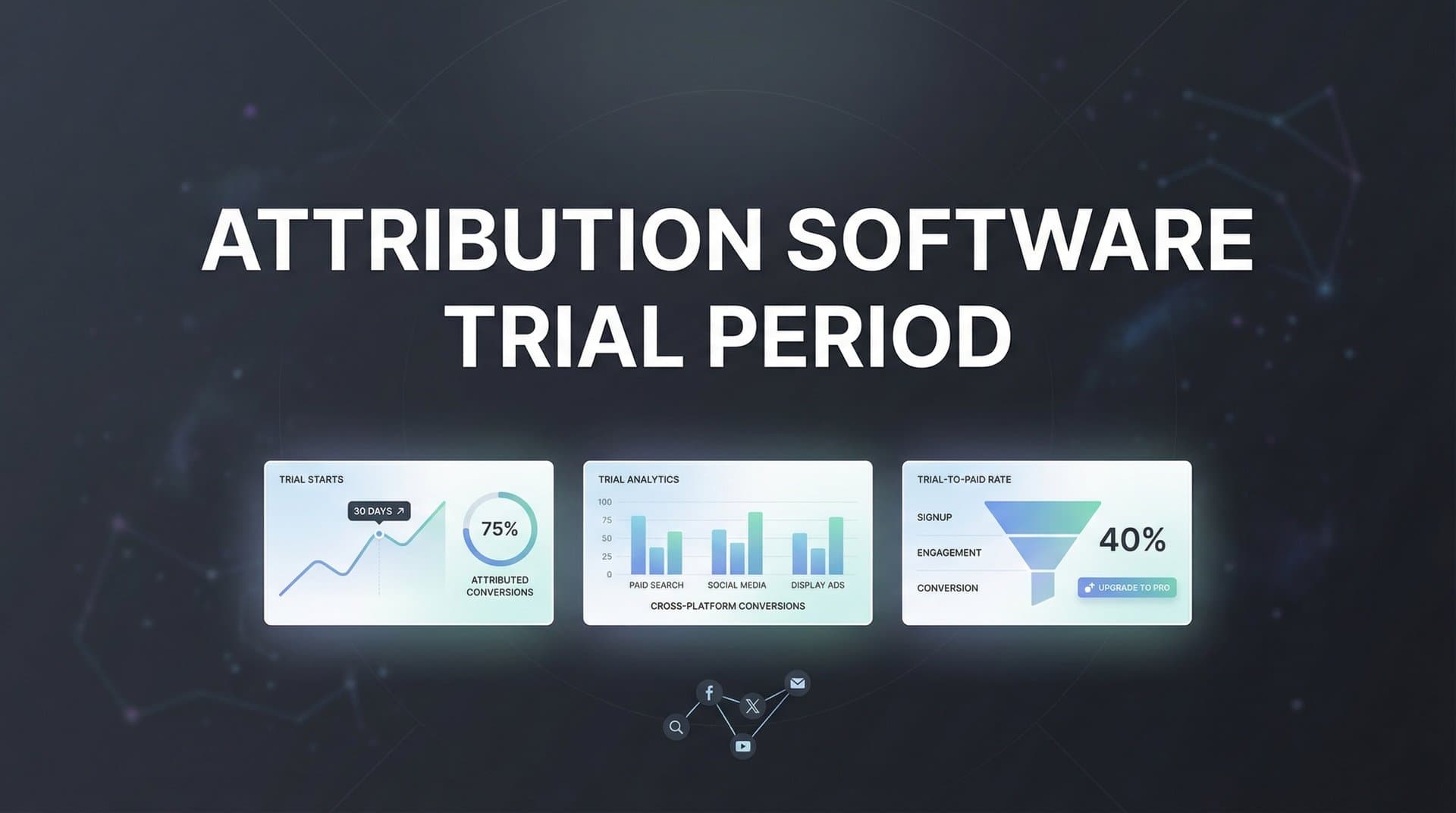

You've got 14 days to figure out if this attribution software will actually solve your tracking problems or just add another dashboard to your stack. The clock starts ticking the moment you sign up, and here's the reality: most marketing teams waste their trial period clicking through features, admiring the interface, and telling themselves they'll "really dig in tomorrow." Then day 13 hits, panic sets in, and they're making a multi-thousand-dollar decision based on whether the UI looked clean and the sales rep seemed nice.

The stakes are higher than you think. Choose the wrong attribution platform, and you're not just wasting money on software—you're making budget decisions based on incomplete or inaccurate data. You might scale campaigns that aren't actually profitable, cut channels that are secretly driving conversions, or completely miss the customer journey patterns that could transform your marketing strategy.

This guide will show you how to run an attribution software trial like a professional evaluator, not a casual browser. We'll cover the specific tests you need to run, the red flags that should make you walk away, and the questions that separate marketing-friendly platforms from data theater. By the end, you'll know exactly how to use your trial period to make a confident, data-backed decision about which attribution platform deserves your business.

Why Your Trial Period Strategy Matters More Than the Software Itself

Here's what separates a useful trial from a wasted two weeks: having a clear evaluation plan before you even create your account. Most marketers approach trials reactively—they sign up, poke around the dashboard, maybe connect one ad account, and hope insights magically appear. That's not evaluation. That's tourism.

An active trial starts with specific questions you need answered. What's the real customer journey for your $200+ orders? Which touchpoints actually contribute to conversions versus just being present in the data? Can you trust this platform's numbers enough to shift 20% of your budget based on its recommendations? Write these questions down before day one.

The biggest trial mistake is focusing on interface polish instead of data accuracy. Yes, a clean dashboard matters for daily use, but a beautiful UI displaying wrong data is worse than a clunky interface showing the truth. Many platforms invest heavily in making their trials look impressive—pre-loaded demo data, slick visualizations, smooth onboarding flows. None of that tells you whether the platform will accurately track YOUR customer journeys.

Another common trap: not connecting real data sources during the trial. Some marketers hesitate to link their actual ad accounts and CRM during evaluation, worried about data security or setup time. This is backwards. You cannot evaluate attribution accuracy using demo data or hypothetical scenarios. The entire point of the trial is to see how the platform handles your specific tracking challenges, your conversion events, your customer journey complexity.

Waiting until the last day to evaluate seriously defeats the purpose of the trial. Attribution data needs time to accumulate. If you spend the first ten days "getting familiar" and only run serious tests in the final 48 hours, you won't have enough data to validate accuracy. Customer journeys often span multiple days or weeks, so you need the full trial period to capture meaningful patterns.

Set your success criteria upfront: "By the end of this trial, I need to know if this platform can accurately track conversions from Facebook, Google, and email within 5% of my actual numbers. I need to see at least three actionable insights about channel performance I didn't know before. And I need confidence that my team can use this daily without constant support tickets." Specific, measurable, achievable within the trial timeframe.

Essential Features to Test During Your Attribution Software Trial

Start with data integration capabilities, and test them with your actual accounts—not later, not "once we commit," but during the trial. Connect your primary ad platforms, your CRM, and your website tracking on day one. This immediately reveals whether the platform's "seamless integrations" marketing copy matches reality.

Watch for integration depth, not just integration existence. Does the Facebook Ads connection pull campaign data, ad set performance, and individual ad creative metrics? Or does it just show basic spend and click numbers you could get from the platform itself? Real attribution requires granular data. If the integration feels surface-level during trial, it won't magically improve after you pay.

Test the setup process honestly: could your marketing coordinator handle this without engineering support? Many attribution platforms claim "no-code setup" but actually require custom JavaScript, webhook configuration, or API key management that demands technical expertise. If you're struggling during the trial with dedicated support, imagine the frustration when you're a regular customer with a support ticket queue.

Attribution model flexibility separates serious platforms from basic analytics tools. During your trial, you should be able to view the same conversion data through multiple attribution lenses: first-touch (what started the journey), last-touch (what closed the deal), and multi-touch models that credit all touchpoints. Run the same date range through different models and see how the story changes.

This comparison reveals critical insights. If first-touch attribution shows Facebook driving most conversions, but last-touch shows Google dominating, you're seeing the difference between awareness channels and closing channels. Both matter. Platforms that force you into a single attribution view are making strategic decisions for you, and they might be wrong for your business model. Before committing to any platform, make sure you understand what its multi-touch attribution modeling can and cannot do.
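To make the model comparison concrete, here is a minimal Python sketch of first-touch, last-touch, and linear multi-touch attribution. The journeys, channel names, and order values are hypothetical, and linear (equal-split) crediting is only one of several multi-touch schemes; a real platform may use time-decay, position-based, or data-driven weights instead.

```python
from collections import defaultdict

# Hypothetical converting journeys: ordered touchpoints plus order value.
journeys = [
    (["facebook", "email", "google"], 300.0),
    (["facebook", "google"], 100.0),
    (["google"], 50.0),
]


def attribute(journeys, model):
    """Return the revenue credited to each channel under the given model."""
    credit = defaultdict(float)
    for touchpoints, value in journeys:
        if model == "first_touch":
            # All credit goes to the touchpoint that started the journey.
            credit[touchpoints[0]] += value
        elif model == "last_touch":
            # All credit goes to the touchpoint right before conversion.
            credit[touchpoints[-1]] += value
        elif model == "linear":
            # Credit is split equally across every touchpoint.
            share = value / len(touchpoints)
            for channel in touchpoints:
                credit[channel] += share
        else:
            raise ValueError(f"unknown model: {model}")
    return dict(credit)


for model in ("first_touch", "last_touch", "linear"):
    print(model, attribute(journeys, model))
```

With this sample data, first-touch credits Facebook with most of the revenue, last-touch credits Google with all of it, and the linear model spreads it across all three channels. That is the same awareness-versus-closing split described above, and the total credited revenue is identical under every model.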

Real-time tracking accuracy is your most important trial test. Run controlled experiments: make a purchase on your own site, click your own ads (from different devices/browsers to avoid skewing data), submit lead forms. Then verify the platform captured every touchpoint correctly. Check timestamps, source attribution, conversion values. Even small discrepancies here signal bigger problems at scale.

Pay special attention to cross-device and cross-browser tracking. The customer journey rarely happens in one session anymore. Someone sees your Instagram ad on mobile during lunch, researches on their work computer that afternoon, and converts on their home laptop that evening. Can the platform connect those dots? Test this scenario during your trial with real user behavior, not assumptions.

Server-side tracking has become non-negotiable for accurate attribution. Browser-based tracking alone misses conversions due to ad blockers, iOS privacy features, and cookie restrictions. During your trial, verify whether the platform offers server-side tracking and test its implementation. This is especially critical if a large share of your traffic comes from iOS devices or privacy-conscious audiences.

Reporting depth matters for daily decision-making. Can you drill down from campaign performance to individual ad creative? Can you segment by customer value, not just conversion count? Can you export data for custom analysis or presentations to stakeholders? Test the reporting features you'll actually use weekly, not just the impressive dashboards shown in demo videos.

Building Your Trial Evaluation Checklist

Week one is integration week. Your priority is getting data flowing correctly, not analyzing it yet. Connect all your primary marketing platforms—ad accounts, CRM, email marketing, website tracking. Configure your conversion events properly: purchases, leads, sign-ups, whatever matters for your business. Set up team member access so colleagues can explore alongside you.

Verify data flow within the first 48 hours. Don't wait a week to discover that your Facebook integration isn't pulling data correctly or your conversion tracking has a configuration error. Run test conversions, check that they appear in the platform, confirm the attribution looks accurate. Fix any issues immediately while you still have trial time to evaluate the corrected setup.

Document your integration experience honestly. How long did setup actually take? How many support tickets did you need to open? Were the documentation and guides helpful, or did you spend hours troubleshooting? This experience predicts your future with the platform: if basic setup was painful, ongoing management will be too. Knowing what implementation costs in time and resources, not just in subscription fees, helps set realistic expectations.

Week two shifts to evaluation and comparison. Now that data is flowing, start running attribution analyses. Compare the platform's conversion numbers against your actual sales data or CRM records. Small discrepancies are normal (different attribution windows, processing delays), but if you're seeing 20%+ gaps, that's a red flag requiring explanation.
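The comparison against your sales records can be reduced to a small calculation. The sketch below is one way to do it in Python, using the 5% accuracy target and the 20% red-flag level mentioned in this guide. The function names and the middle "investigate" band are assumptions added for illustration, not part of any platform's tooling.

```python
def conversion_gap(platform_count, actual_count):
    """Fraction of actual conversions the platform missed.

    A negative result means the platform over-reported.
    """
    if actual_count <= 0:
        raise ValueError("actual_count must be positive")
    return (actual_count - platform_count) / actual_count


def classify_gap(gap, target=0.05, red_flag=0.20):
    """Label a gap using the thresholds from this guide (assumed bands)."""
    size = abs(gap)
    if size <= target:
        return "within target"
    if size < red_flag:
        return "investigate"
    return "red flag"


# Hypothetical week-two check: the platform reports 96 conversions, the CRM shows 100.
gap = conversion_gap(platform_count=96, actual_count=100)
print(f"{gap:.0%} gap: {classify_gap(gap)}")
```

Measuring the gap against your own records, rather than against the platform's number, keeps the percentage comparable across tools.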

Test different attribution models against each other. Look at your top campaigns through first-touch, last-touch, and multi-touch lenses. Which channels look stronger or weaker depending on the model? This exercise reveals both the platform's capabilities and insights about your actual marketing mix. If all models show identical results, the platform might not be doing sophisticated attribution.

Evaluate reporting depth during week two. Build the reports you'll actually need for weekly marketing reviews. Can you easily create a dashboard showing ROAS by campaign? Can you segment performance by customer lifetime value? Can you identify which ad creatives perform best at different funnel stages? Test the reporting features that match your real workflow.

Final assessment happens in the last few days of your trial. This is when you step back from feature testing and ask strategic questions. Could your team use this platform daily without frustration? Do you trust the data enough to make significant budget decisions based on it? Have you discovered insights during the trial that you couldn't see before?

Calculate potential ROI based on trial insights. If the platform revealed that one of your channels has 40% better ROAS than you thought, what's that worth in budget optimization? If you discovered a customer journey pattern that could improve conversion rates by 15%, what's the revenue impact? Using an attribution software ROI calculator can help quantify whether the platform cost is justified by the decision-making improvement it enables.
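As a rough worked example of that ROI arithmetic, the Python sketch below estimates what a budget shift is worth after the platform fee. Every number is hypothetical, and the calculation assumes ROAS stays constant as spend moves between channels, which rarely holds exactly because returns tend to diminish at higher spend.

```python
def reallocation_gain(shifted_spend, roas_from, roas_to):
    """Extra monthly revenue from moving spend to a higher-ROAS channel.

    Assumes both ROAS figures hold at the new spend levels.
    """
    return shifted_spend * (roas_to - roas_from)


def net_monthly_value(gain, platform_cost):
    """Revenue gain left over after paying for the attribution platform."""
    return gain - platform_cost


# Hypothetical: the trial shows a channel at 3.5 ROAS, 40% better than the
# 2.5 you assumed, so you move $5,000 a month into it from a 2.5-ROAS channel.
gain = reallocation_gain(shifted_spend=5_000, roas_from=2.5, roas_to=3.5)
net = net_monthly_value(gain, platform_cost=500)
print(f"gain ${gain:,.0f}, net of platform cost ${net:,.0f}")
```

Treat the result as an upper bound on the first month rather than a forecast; the useful question is whether the net figure stays positive under more conservative ROAS assumptions.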

Red Flags That Signal a Platform Isn't Right for You

Integration limitations during trial are deal-breakers, not minor inconveniences. If connecting your primary ad platforms feels hacky—requiring manual CSV uploads, third-party connectors, or workarounds—that friction won't disappear after you pay. Some platforms market "integrations" that are actually just basic API connections requiring significant manual work. If it's painful now, it'll be worse at scale.

Watch for missing integrations that matter to your stack. If you run significant LinkedIn campaigns but the platform treats LinkedIn as an afterthought with limited data pull, you'll be making decisions with incomplete information. The platform might be excellent for Facebook and Google advertisers, but wrong for your specific channel mix. Don't convince yourself you'll "make it work"—you won't.

Data discrepancies you can't explain are the biggest red flag of all. Some variance between attribution platforms and ad platform reporting is expected: different attribution windows, processing delays, how each system handles view-through conversions. But if your platform shows 100 conversions and your actual sales records show 150, and support can't explain why a third of your real conversions are missing, walk away. Understanding the difference between Google Analytics and attribution software can help you identify whether discrepancies are normal or problematic.

Test data accuracy with known ground truth. Make controlled purchases, submit test leads, run campaigns where you know the exact results. If the platform can't accurately track these controlled scenarios, it definitely can't handle the complexity of real customer journeys. Accuracy problems during trial only get worse with scale and complexity.
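One way to run this ground-truth check is to keep a log of every controlled conversion you create and compare it with what the platform recorded. The Python sketch below assumes you can get the platform's records into a simple mapping, for example from an export. The order IDs, sources, and values are hypothetical.

```python
def verify_test_conversions(expected, recorded):
    """Compare controlled test conversions with what the platform recorded.

    Both arguments map an order ID to a (source, value) tuple.
    """
    # Conversions you made that never showed up in the platform.
    missing = sorted(set(expected) - set(recorded))
    # Conversions that showed up with the wrong source or value.
    mismatched = sorted(
        order_id
        for order_id in expected
        if order_id in recorded and recorded[order_id] != expected[order_id]
    )
    return {"missing": missing, "mismatched": mismatched}


# Hypothetical log of three test purchases and the platform's view of them.
expected = {
    "T-001": ("facebook", 49.0),
    "T-002": ("google", 120.0),
    "T-003": ("email", 75.0),
}
recorded = {
    "T-001": ("facebook", 49.0),
    "T-002": ("direct", 120.0),  # captured, but credited to the wrong source
}

report = verify_test_conversions(expected, recorded)
print(report)
```

Anything in either list is worth raising with support while you still have trial time left to see how they respond.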

Support responsiveness during trial predicts your future customer experience. Many platforms provide white-glove support during trials—dedicated onboarding specialists, fast response times, proactive check-ins. That's expected. What matters is the quality of their answers and their willingness to acknowledge limitations. If they deflect tough questions, blame other platforms for discrepancies, or promise features "coming soon," that's your preview of post-sale support.

Pay attention to how support handles problems. Do they actually solve issues, or just send documentation links? When you report a data discrepancy, do they investigate thoroughly or dismiss it as "normal variance"? The best support teams during trial are honest about limitations, transparent about how features work, and genuinely helpful even when the answer is "our platform might not be the best fit for your specific use case."

Platform performance issues during trial shouldn't be ignored. If dashboards load slowly, reports time out, or the interface feels sluggish with just your trial data, imagine the performance with months of accumulated data and multiple team members accessing simultaneously. Performance problems rarely improve—they usually get worse as your data volume grows.

Questions to Ask Before Your Trial Ends

Technical capabilities often hide in the details. Ask about server-side tracking implementation: Is it included in your pricing tier? Does it require developer resources to set up? How does it handle the specific conversion events that matter to your business? Many platforms advertise server-side tracking but limit it to enterprise plans or charge extra for implementation support.

Data retention policies affect your long-term analysis capabilities. How long does the platform store your raw attribution data? Can you access historical data for year-over-year comparisons? Some platforms delete detailed data after 90 days, keeping only aggregated reports. If you need to analyze seasonal patterns or long sales cycles, short retention windows limit your strategic capabilities.

API access matters if you want custom integrations or data exports. Can you pull attribution data into your own data warehouse? Can you build custom dashboards in other tools using the platform's data? Platforms that lock you into their reporting interface limit your flexibility. Ask about API rate limits, documentation quality, and whether API access costs extra.

Pricing transparency should be crystal clear before trial end. What's the actual cost after trial, including all the features you tested? Are there usage-based charges for tracked events, connected ad accounts, or team members? What happens if you exceed limits—do they throttle service or bill overages? Get specific pricing for your projected usage, not just the advertised base price. Reviewing attribution software pricing plans across multiple vendors helps you understand what's reasonable for your needs.
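To turn a vendor's answers into a number, you can project your monthly bill from your expected usage. The Python sketch below assumes a pricing structure with a base fee, an event allowance with per-thousand overage charges, and per-seat fees. That structure and all the figures are hypothetical, so substitute whatever terms the vendor actually quotes.

```python
def projected_monthly_cost(base_price, included_events, tracked_events,
                           overage_per_1k, included_seats, seats,
                           price_per_seat):
    """Estimate a monthly bill under an assumed usage-based pricing scheme."""
    # Only usage above the plan's allowance is billed as overage.
    extra_events = max(0, tracked_events - included_events)
    extra_seats = max(0, seats - included_seats)
    overage = (extra_events / 1000) * overage_per_1k
    return base_price + overage + extra_seats * price_per_seat


# Hypothetical quote: $500 base, 100k events and 3 seats included,
# $2 per extra 1,000 events, $50 per extra seat.
cost = projected_monthly_cost(
    base_price=500,
    included_events=100_000,
    tracked_events=160_000,
    overage_per_1k=2.0,
    included_seats=3,
    seats=5,
    price_per_seat=50,
)
print(f"projected monthly cost: ${cost:,.2f}")
```

Running this with your busiest month's event volume, not your average, shows how far the real bill can drift from the advertised base price.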

Contract flexibility reveals how confident the platform is in its value. Are you locked into annual contracts, or can you start month-to-month? What's the cancellation process if the platform doesn't work out? Some platforms require 90-day cancellation notices or charge early termination fees. Others let you cancel anytime. The more flexible the terms, the more confident they are you'll stay because of value, not contracts. A monthly subscription option often indicates that a vendor is confident in its product.

Ask what happens to your trial data after you sign up. Does it carry over seamlessly, or do you start fresh? If you've spent two weeks getting data flowing correctly, you don't want to lose that setup and start over. Clarify whether your trial workspace becomes your production workspace or whether migration is required.

The success validation question is the most important: can you point to at least one actionable insight the trial revealed about your marketing spend? Not "the dashboard looks nice" or "the data seems accurate," but an actual strategic decision you could make based on what you learned. If you can't identify concrete value after two weeks of testing, the platform probably isn't delivering what you need.

Making the Final Call: Commit, Extend, or Walk Away

Score your evaluation criteria objectively, not emotionally. Before your trial started, you defined success criteria—data accuracy requirements, specific features needed, integration capabilities, team usability standards. Now grade the platform against each criterion honestly. Did it meet your accuracy threshold? Can your team actually use it daily? Did you discover insights worth the platform cost?
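A simple weighted scorecard is one way to keep that grading objective. The Python sketch below is illustrative only: the criteria names, weights, 1-to-5 scores, and the minimum passing score are all assumptions you would replace with the success criteria you wrote down before the trial.

```python
# Hypothetical scorecard: criterion -> (weight, score from 1 to 5).
criteria = {
    "data accuracy": (4, 4),
    "integrations": (2, 5),
    "team usability": (2, 3),
    "insight value": (2, 4),
}


def weighted_score(criteria):
    """Weighted average score across all criteria."""
    total_weight = sum(weight for weight, _ in criteria.values())
    return sum(weight * score for weight, score in criteria.values()) / total_weight


def failed_criteria(criteria, floor=3):
    """Criteria scoring below the floor, regardless of the overall average."""
    return [name for name, (_, score) in criteria.items() if score < floor]


print("overall:", weighted_score(criteria))
print("below floor:", failed_criteria(criteria))
```

The floor check matters because a high average can hide a failing score on a criterion you cannot compromise on, such as data accuracy.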

Don't let sunk cost bias push you toward a wrong-fit platform. The time you invested in the trial is gone whether you sign up or not. The question isn't "should I let the effort I put into this trial go to waste?" but "will this platform improve my marketing decisions enough to justify ongoing cost?" If the answer is no, the trial did its job: it saved you from a bad long-term commitment.

Sometimes the right call is requesting a trial extension. Legitimate reasons include: needing more data accumulation time to validate accuracy (especially for longer sales cycles), waiting for a specific campaign to run so you can test attribution, or wanting your full team to evaluate before committing. Most platforms will extend trials for valid reasons, especially if you've been actively using the platform and providing feedback.

What's not a legitimate extension reason: procrastination, hoping you'll magically become more certain with a few extra days, or avoiding the decision. If you haven't used the trial productively so far, more time won't help. Extension requests should come with a specific plan: "I need five more days to run our Black Friday campaign through the platform and verify attribution accuracy on our highest-volume weekend."

The confidence test is your final decision filter: would you trust this platform's data to make a significant budget decision today? Not theoretically, not eventually, but right now. If you saw data suggesting you should shift $10,000 from Google to Facebook based on the platform's attribution, would you do it? If your gut answer is "I'd want to verify that elsewhere first," you don't trust the platform enough to pay for it.

Consider the alternative cost of not having better attribution. If you walk away from this platform, what's your plan? Continue making decisions based on last-click attribution in Google Analytics? Rely on ad platform reporting that takes credit for everything? Guess at channel performance? Sometimes the question isn't whether a platform is perfect, but whether it's significantly better than your current alternative. A thorough marketing attribution software comparison helps you understand where each option stands.

Make your decision before the trial deadline creates artificial urgency. Many platforms design trials to end right before the weekend or use countdown timers to create pressure. Don't let manufactured urgency force a decision. If you need an extra day to think through your evaluation, take it. The right platform will still be there Monday morning.

Your Trial Period Is Your Best Investment Protection

An attribution software trial isn't about learning where buttons are or admiring dashboard design. It's your opportunity to pressure-test whether a platform can actually solve your marketing attribution challenges with your data, your campaigns, your team. The goal is validation, not exploration.

The most successful trial evaluations start with clear success criteria, connect real data sources immediately, and test accuracy rigorously throughout the trial period. They treat the trial like a job interview—the platform is applying to become a critical part of your marketing infrastructure, and you're the hiring manager checking references and validating claims.

Remember that choosing the wrong attribution platform costs more than the subscription fee. It costs time spent making decisions based on inaccurate data, budget wasted scaling campaigns that aren't actually profitable, and opportunity cost from missing insights that could transform your marketing performance. A thorough trial evaluation is your protection against these expensive mistakes. Exploring marketing attribution software features before starting your trial gives you a framework for what to test.

Approach your next attribution software trial with a structured evaluation plan, specific questions that must be answered, and the confidence to walk away if the platform doesn't meet your standards. The right attribution platform should earn your business by delivering accurate, actionable data that improves your marketing decisions from day one.
