You're staring at your attribution dashboard. The numbers look great—conversions are up, ROAS is strong, and every channel claims credit for success. Then your CFO walks in with the question that makes every marketer's stomach drop: "But would these customers have bought from us anyway?"

It's the question attribution models can't answer. They'll tell you which ads people saw before converting, but they won't tell you if those ads actually caused the conversion. That's the difference between correlation and causation—and it's why smart marketers are turning to incrementality testing.

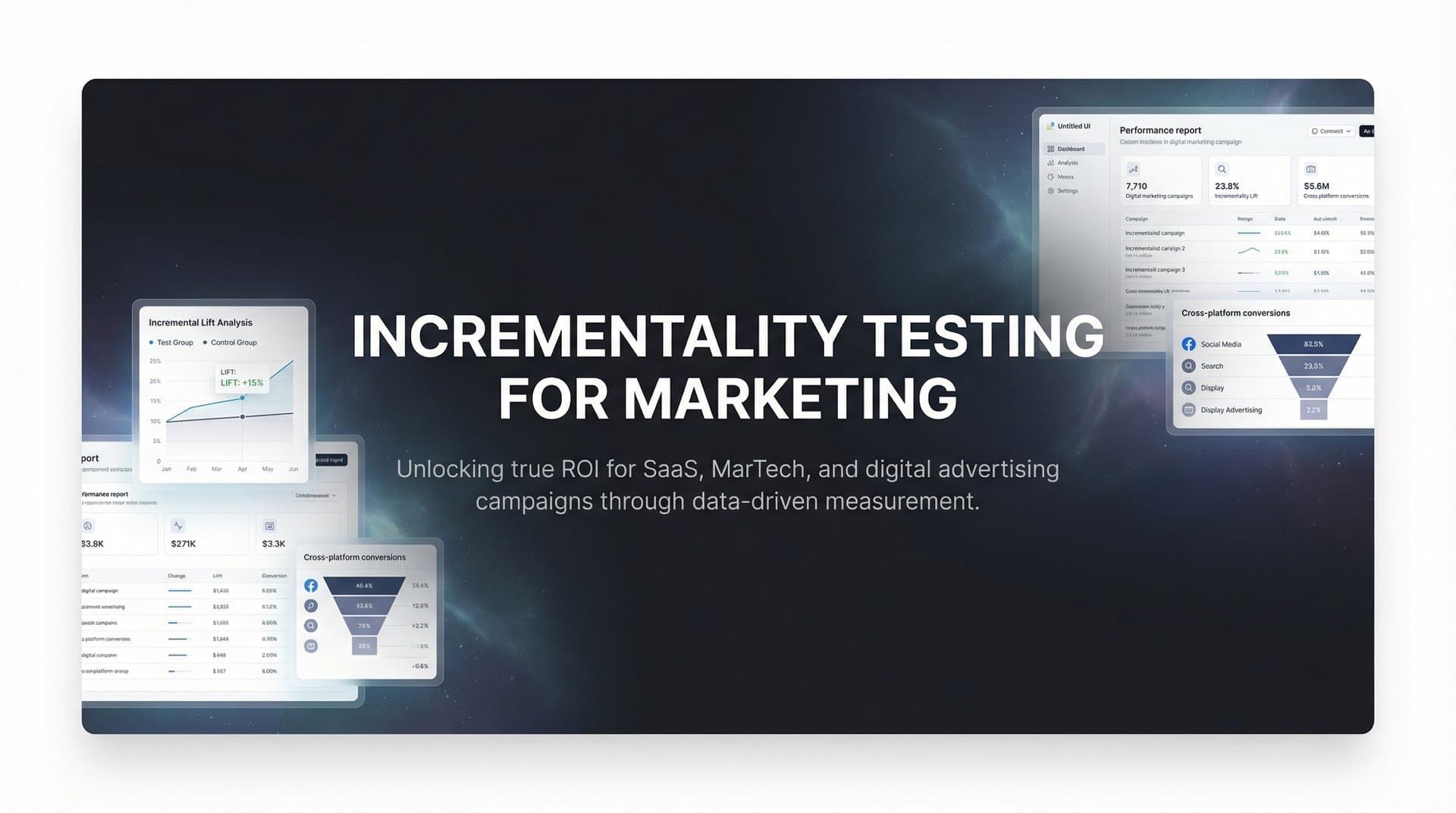

Incrementality testing is the scientific method that finally answers the "would it have happened anyway?" question. Instead of tracking what people did after seeing your ads, it proves what wouldn't have happened without them. This article will show you how to design, execute, and interpret incrementality tests that transform your marketing from educated guessing into evidence-based decision making.

The Science Behind Measuring True Marketing Lift

Incrementality testing is a controlled experiment that compares two groups: people exposed to your marketing and people who weren't. The difference in conversion rates between these groups reveals your marketing's causal effect—the lift you actually created.

Think of it like a clinical drug trial. Researchers don't just give everyone the medication and track who gets better—they need a control group that receives a placebo. Only by comparing the two groups can they isolate the drug's true effect. Marketing works the same way.

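The key metric is incremental lift: the conversions that would NOT have occurred without your marketing intervention, usually expressed relative to the baseline. If your exposed and control groups are the same size, and 1,000 people in the exposed group convert while 700 people in the control group convert, your marketing produced 300 incremental conversions. That is a 43% lift over the 700-conversion baseline (300 / 700). Put another way, 30% of the exposed group's conversions (300 of 1,000) would not have happened without your marketing. Those 300 conversions are what your marketing actually caused.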
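Here is the same arithmetic as a minimal Python sketch. It assumes you know each group's size as well as its conversions. The 50,000-person group sizes below are hypothetical, chosen so the example matches the 1,000 vs. 700 figures. Because the control group's conversion rate is scaled to the exposed group's size, the same function also works when the groups are unequal, as with a 10% holdout.

```python
def incremental_lift(exposed_conversions, exposed_size, control_conversions, control_size):
    """Estimate incremental conversions and lift from a holdout test.

    The control group's conversion rate stands in for "what would have
    happened anyway" and is scaled to the exposed group's size.
    """
    baseline_rate = control_conversions / control_size
    expected_without_ads = baseline_rate * exposed_size  # the counterfactual
    incremental = exposed_conversions - expected_without_ads
    return {
        "incremental_conversions": incremental,
        # Lift relative to the baseline (300 / 700 in the example).
        "lift_over_baseline": incremental / expected_without_ads,
        # Share of exposed-group conversions caused by marketing (300 / 1,000).
        "share_incremental": incremental / exposed_conversions,
    }


# Hypothetical equal-sized groups of 50,000 people each.
result = incremental_lift(1_000, 50_000, 700, 50_000)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```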

This fundamentally differs from attribution models. Attribution tells you which touchpoints get credit based on rules or algorithms—last click, first click, linear, time decay, or data-driven models. But attribution assumes every touchpoint contributed value. It can't tell you if removing a touchpoint would actually change outcomes. Understanding multi-touch marketing attribution helps clarify why these models have inherent limitations.

Incrementality testing makes no assumptions. It directly measures what happens when you remove marketing from the equation. If your brand search campaign shows a 10x ROAS in attribution but only 1.5x incremental ROAS in testing, you've discovered that most of those "conversions" would have happened anyway—people were already searching for your brand.

The power of incrementality lies in answering counterfactual questions. What would have happened in an alternate reality where you didn't run that campaign? You can't observe that alternate reality directly, but you can create it through controlled experiments. Your holdout group becomes that alternate reality.

When Standard Attribution Falls Short

Attribution models work well for daily optimization, but they systematically mislead you in specific scenarios. Understanding when attribution breaks down helps you know when incrementality testing becomes essential.

Consider brand awareness campaigns. You run YouTube video ads to introduce your product to new audiences. The campaign generates millions of impressions, but your attribution report shows minimal conversions—maybe just a handful of view-through conversions. Leadership questions the spend.

Here's what attribution misses: those YouTube viewers don't convert immediately. They become aware of your brand, then weeks later they search for your product category, see your brand in results, click a Google Ad, and convert. Attribution credits the Google Ad with a last-click conversion. The YouTube campaign that made them aware of your brand gets zero credit.

An incrementality test reveals the truth. When you withhold YouTube ads from a control group, you discover that group searches for your brand 40% less frequently and converts 25% less overall. Your brand campaign was driving significant lift—attribution just couldn't see it because the conversion path was indirect.

Retargeting campaigns present the opposite problem. Your attribution report shows retargeting with an impressive 8x ROAS. You're targeting people who visited your product pages but didn't purchase, showing them ads across the web, and they're converting at high rates.

But incrementality testing often reveals that retargeting captures demand rather than creating it. When you withhold retargeting ads from a control group, many of those high-intent users convert anyway—they return directly to your site or search for your brand. Your actual incremental ROAS might be closer to 2x, meaning 75% of those "retargeting conversions" would have happened without the ads.
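The percentages in the brand search and retargeting examples come from comparing the two ROAS figures. A minimal sketch, assuming both figures are measured on the same spend and the same revenue definition (if they are not, the comparison does not hold). The function name is hypothetical.

```python
def non_incremental_share(attributed_roas: float, incremental_roas: float) -> float:
    """Fraction of attributed return that would likely have happened anyway.

    Assumes attributed and incremental ROAS share the same spend and revenue basis.
    """
    if attributed_roas <= 0:
        raise ValueError("attributed_roas must be positive")
    return 1 - incremental_roas / attributed_roas


print(f"{non_incremental_share(8.0, 2.0):.2f}")   # retargeting example: 0.75
print(f"{non_incremental_share(10.0, 1.5):.2f}")  # brand search example: 0.85
```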

The third scenario where attribution misleads involves cross-channel overlap. A user sees your Facebook ad and clicks but doesn't convert. The next day they see your Google Display ad and click, but again don't convert. Two days later they search for your brand name, click your Google Search ad, and purchase.

Most attribution models credit only the final search click. Some give partial credit to earlier touchpoints. But none can tell you which channels actually mattered. Did the Facebook ad create initial awareness? Did the display ad reinforce it? Or would the user have searched for your brand anyway based on word-of-mouth or organic discovery?

Incrementality testing answers these questions by testing channels in isolation. You might discover that Facebook drives significant incremental brand searches (proving its awareness value), while display retargeting shows minimal incrementality (proving it's mostly capturing existing demand). This transforms how you allocate budget across channels. For deeper insights into incrementality testing for paid advertising, consider how each channel contributes differently to your overall strategy.

Designing Your First Incrementality Test

The most common incrementality testing method is the holdout group approach. You randomly divide your target audience into two groups: an exposed group that sees your ads normally, and a control group that doesn't see your ads at all. After running the test for a sufficient duration, you compare conversion rates between groups.

Randomization is critical. If you choose your control group based on any characteristic—geography, demographics, past behavior—you introduce bias that invalidates your results. The control group must be a random sample from the same audience pool as your exposed group. Most ad platforms offer built-in tools for creating random holdout groups within your targeting parameters.
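If you control assignment yourself, for example when building a suppression list that excludes the control group from an uploaded audience, one common approach is deterministic hash-based bucketing. This is a minimal sketch, assuming each user has a stable ID. The function name and experiment label are hypothetical, and this is not how any particular ad platform assigns its own holdouts.

```python
import hashlib


def assign_group(user_id: str, experiment: str, holdout_share: float = 0.10) -> str:
    """Assign a user to "control" or "exposed" for one experiment.

    Hashing the experiment name together with the user ID gives an assignment
    that is effectively random with respect to user characteristics, stays the
    same every time the user is seen, and reshuffles users for each new experiment.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) / 16**8  # first 32 bits mapped to [0, 1)
    return "control" if bucket < holdout_share else "exposed"


# Hypothetical usage: collect the control group for a suppression list.
user_ids = [f"user-{i}" for i in range(10)]
control = [u for u in user_ids if assign_group(u, "facebook-q3-holdout") == "control"]
print(control)
```

Including the experiment name in the hash means a user who lands in control for one test can land in the exposed group for the next, so no set of customers is permanently held out.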

The size of your holdout group involves a tradeoff. Larger holdout groups provide more statistical confidence but mean withholding ads from more potential customers. Most marketers start with a 10% holdout—large enough for reliable results while minimizing opportunity cost. If you're testing a high-volume channel, you might use a 5% holdout. For lower-volume channels, you might need 20% or more.

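Calculating the minimum sample size requires knowing your baseline conversion rate and the smallest lift you want to be able to detect. If your baseline conversion rate is 2%, you need enough people in each group to tell a real lift apart from random noise. A common rule of thumb is to aim for at least 1,000 conversions in your exposed group during the test period, but treat that as a floor, not a guarantee. With a 10% holdout, your control group contains only about one-ninth as many people as your exposed group, and the smaller group usually limits your statistical power. Detecting a 10% lift takes far more data than detecting a 20% lift, so run a power calculation for your own baseline rate and holdout size before launch.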
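A rough power calculation can be sketched with Python's standard library. This uses the normal approximation for comparing two conversion rates with an unequal split, where `holdout_share` of the audience goes to control. The function name and defaults (5% two-sided significance, 80% power) are assumptions; for high-stakes tests, a dedicated power calculator or a statistician is worth consulting.

```python
import math
from statistics import NormalDist


def required_audience(baseline_rate, relative_lift, holdout_share=0.10,
                      alpha=0.05, power=0.80):
    """Approximate audience needed to detect a relative lift in conversion rate.

    Normal approximation for two proportions with an unequal split:
    `holdout_share` of the audience is control, the rest is exposed.
    Returns (total, control_size, exposed_size).
    """
    p_control = baseline_rate
    p_exposed = baseline_rate * (1 + relative_lift)  # rate if the lift is real
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)     # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    variance = (p_control * (1 - p_control) / holdout_share
                + p_exposed * (1 - p_exposed) / (1 - holdout_share))
    total = math.ceil((z_alpha + z_power) ** 2 * variance / (p_exposed - p_control) ** 2)
    return total, math.ceil(total * holdout_share), math.ceil(total * (1 - holdout_share))


total, control, exposed = required_audience(baseline_rate=0.02, relative_lift=0.10)
print(f"total={total:,} control={control:,} exposed={exposed:,}")
```

With a 2% baseline and a 10% holdout, this approximation asks for more than 400,000 people in total to detect a 10% relative lift, which works out to several thousand exposed-group conversions. Moving `holdout_share` toward 50% shrinks the total required, at the cost of withholding ads from more people.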

Test duration depends on your conversion cycle. If most customers convert within days of first exposure, a two-week test might suffice. If your sales cycle spans weeks or months, you need a longer test period to capture the full effect. A common mistake is ending tests too early, before your marketing's full impact materializes.

For businesses with longer consideration cycles, consider running your test for at least one full purchase cycle plus a margin. If your average customer takes 30 days from first awareness to purchase, run your test for 45-60 days. This ensures you're measuring complete conversions, not just early indicators.

Geo-testing offers an alternative approach when holdout groups aren't feasible. Instead of randomly withholding ads from individuals, you run campaigns in some geographic markets while keeping similar markets as controls. You might run Facebook ads in Chicago, Dallas, and Seattle while withholding them from Detroit, Phoenix, and Portland—markets with similar characteristics.

Geo-testing works well for brand awareness campaigns and channels where individual-level holdouts are difficult. The challenge is ensuring your test and control markets are truly comparable. You need similar market sizes, demographics, competitive dynamics, and seasonality. Even small differences can skew results.

A sophisticated geo-testing approach uses synthetic controls: a weighted combination of multiple control markets that best matches your test market's historical patterns. The weights are chosen so that the combination tracks the test market's pre-test data, such as weekly conversions, as closely as possible. Matching the average alone is not enough. If your test market's baseline conversion rate is 2.5%, the weighted combination should not only average about 2.5% historically but also rise and fall with the test market week to week. This reduces the risk that market differences confound your results. Leveraging data science for marketing attribution can help you build more accurate synthetic control models.
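A minimal sketch of fitting synthetic-control weights in pure Python, assuming you have weekly conversions for the test market and each candidate control market over a pre-test period. Following the usual synthetic-control setup, weights are non-negative and sum to 1. The solver is simple projected gradient descent, not any particular library's method, and all function names and market data are hypothetical.

```python
def project_to_simplex(v):
    """Euclidean projection onto {w : every w_i >= 0 and sum(w) == 1}."""
    u = sorted(v, reverse=True)
    running_total, theta = 0.0, 0.0
    for j, u_j in enumerate(u, start=1):
        running_total += u_j
        candidate = (running_total - 1.0) / j
        if u_j - candidate > 0:
            theta = candidate  # keep the threshold from the largest valid j
    return [max(x - theta, 0.0) for x in v]


def synthetic_control_weights(test_pre, controls_pre, iterations=5_000):
    """Fit non-negative weights (summing to 1) over control markets so their
    weighted sum tracks the test market's pre-test series.

    test_pre:     T pre-period values for the test market (e.g. weekly conversions)
    controls_pre: one list of T pre-period values per control market
    """
    m, T = len(controls_pre), len(test_pre)
    # trace(X^T X) bounds the largest eigenvalue from above, so this step size is safe.
    trace = sum(x * x for series in controls_pre for x in series)
    step = 1.0 / (2.0 * trace)
    w = [1.0 / m] * m  # start from equal weights
    for _ in range(iterations):
        fitted = [sum(w[k] * controls_pre[k][t] for k in range(m)) for t in range(T)]
        residual = [fitted[t] - test_pre[t] for t in range(T)]
        # Gradient of the squared error with respect to each weight.
        grad = [2.0 * sum(residual[t] * controls_pre[k][t] for t in range(T)) for k in range(m)]
        w = project_to_simplex([w[k] - step * grad[k] for k in range(m)])
    return w


def synthetic_series(weights, controls):
    """Weighted combination of control-market series (pre- or test-period)."""
    return [sum(w * series[t] for w, series in zip(weights, controls))
            for t in range(len(controls[0]))]


# Hypothetical weekly conversions for three control markets and one test market.
detroit = [100, 120, 90, 110, 130, 95, 105, 115]
phoenix = [80, 60, 100, 70, 65, 90, 85, 75]
portland = [50, 55, 52, 58, 54, 56, 53, 57]
chicago = [0.6 * d + 0.4 * p for d, p in zip(detroit, phoenix)]

weights = synthetic_control_weights(chicago, [detroit, phoenix, portland])
print([round(w, 2) for w in weights])
```

Once the weights are fixed on the pre-test period, apply them to the control markets' test-period data with `synthetic_series`; the gap between the test market's actual conversions and that synthetic baseline is the estimated lift. Because the hypothetical Chicago series is built as 0.6 × Detroit + 0.4 × Phoenix, the fitted weights should land near 0.6, 0.4 and 0.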

Whatever method you choose, document your test design before launching. Specify your hypothesis, test and control groups, duration, primary metrics, and statistical significance threshold. This prevents the temptation to cherry-pick results or extend tests until you see the outcome you want. Rigorous test design separates science from wishful thinking.
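One lightweight way to make that documentation stick is to record the plan as an immutable object before launch. This is a sketch under assumptions: the class and field names are hypothetical rather than a standard schema, and the dates and hypothesis are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)  # frozen: fields cannot be edited after the plan is recorded
class IncrementalityTestPlan:
    """Hypothetical schema for documenting a test design before launch."""
    hypothesis: str
    channel: str
    design: str              # e.g. "user holdout" or "geo test"
    control_group: str       # how the control group is defined
    start: date
    end: date
    primary_metric: str
    alpha: float = 0.05      # significance threshold, fixed up front

    def __post_init__(self):
        if self.end <= self.start:
            raise ValueError("end date must be after start date")
        if not 0 < self.alpha < 1:
            raise ValueError("alpha must be between 0 and 1")

    @property
    def duration_days(self) -> int:
        return (self.end - self.start).days


plan = IncrementalityTestPlan(
    hypothesis="Retargeting drives at least a 10% lift in purchases",
    channel="retargeting",
    design="user holdout",
    control_group="random 10% of site visitors, suppressed from retargeting",
    start=date(2024, 3, 1),
    end=date(2024, 4, 15),
    primary_metric="purchases",
)
print(plan.duration_days)
```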

Reading the Results: From Data to Decisions

Once your test completes, the analysis begins with two simple calculations: spend divided by incremental conversions gives your incremental cost per acquisition, and incremental revenue divided by spend gives your incremental ROAS. If your exposed group generated 1,500 conversions and your control group generated 1,000 conversions (scaled to the same size), you created 500 incremental conversions. If you spent $50,000 on the campaign, your incremental cost per acquisition is $50,000 / 500 = $100.

Compare this to your standard CPA calculation. If you spent $50,000 and saw 1,500 conversions in your exposed group, your reported CPA is $33. But your incremental CPA is $100—three times higher. This gap reveals that two-thirds of those conversions would have happened without your ads. They were correlation, not causation.
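The same comparison as a small Python sketch. It assumes you know each group's size. The 90,000-person exposed group and the 9,000-person control group with 100 conversions are hypothetical, chosen so that the control group scales to the 1,000 conversions in the example.

```python
def cost_metrics(spend, exposed_conversions, exposed_size,
                 control_conversions, control_size):
    """Compare reported CPA with incremental CPA from a holdout test."""
    # Conversions the exposed group would have produced without ads.
    baseline = control_conversions * exposed_size / control_size
    incremental = exposed_conversions - baseline
    return {
        "reported_cpa": spend / exposed_conversions,
        # No positive lift means no finite cost per incremental conversion.
        "incremental_cpa": spend / incremental if incremental > 0 else float("inf"),
        "share_not_incremental": baseline / exposed_conversions,
    }


metrics = cost_metrics(50_000, 1_500, 90_000, 100, 9_000)
print(f"Reported CPA:    ${metrics['reported_cpa']:.2f}")
print(f"Incremental CPA: ${metrics['incremental_cpa']:.2f}")
print(f"Would have converted anyway: {metrics['share_not_incremental']:.0%}")
```

If you also know the average revenue per conversion, multiplying it by the incremental conversions and dividing by spend gives incremental ROAS.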

This reality check surprises many marketers. Channels that look incredibly efficient in attribution often show modest incrementality. The difference is especially stark for lower-funnel tactics like brand search and retargeting, which capture high-intent users who were already likely to convert. Understanding digital marketing performance metrics helps you interpret these differences more accurately.

Before acting on results, verify statistical significance. The standard threshold is 95% confidence, or a p-value below 0.05: if your marketing had no true effect, a difference as large as the one you observed would appear by random chance less than 5% of the time. It does not mean there is a 95% probability that your marketing worked. Most statistical calculators and A/B testing tools compute this automatically.
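For a simple user-level holdout, the significance check is typically a two-proportion z-test. A minimal standard-library sketch, assuming independent users and groups large enough for the normal approximation to hold; geo tests and synthetic controls need different methods. The function name and the numbers in the usage line are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(exposed_conversions, exposed_size,
                          control_conversions, control_size):
    """Two-sided z-test for a difference in conversion rates (pooled variance)."""
    p_exposed = exposed_conversions / exposed_size
    p_control = control_conversions / control_size
    pooled = (exposed_conversions + control_conversions) / (exposed_size + control_size)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / exposed_size + 1 / control_size))
    z = (p_exposed - p_control) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: 1,500 of 90,000 exposed users vs 100 of 9,000 control users converted.
z, p = two_proportion_z_test(1_500, 90_000, 100, 9_000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```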

If your results don't reach statistical significance, you have three options: run the test longer to gather more data, increase your sample size by expanding the test, or accept that you can't detect a meaningful effect with your current setup. Avoid the temptation to declare victory based on directionally positive but non-significant results—that's how bad decisions get made.

When results show low incrementality, resist the urge to immediately cut the channel. First, consider whether your test design might have underestimated impact. Did you test long enough to capture delayed conversions? Did your control group receive spillover exposure through other channels? Could cross-device behavior have hidden some conversions?

Second, evaluate the strategic value beyond direct conversions. A brand awareness campaign might show modest short-term incrementality but build long-term brand equity that compounds over time. Upper-funnel channels often create value that materializes in later conversions attributed to other touchpoints. Exploring alternative metrics for assessing marketing success can reveal value that standard incrementality tests might miss.

The most actionable insight comes from comparing incrementality across channels. You might discover that Facebook shows 3x incremental ROAS while Google Display shows 1.2x. This doesn't mean you cut Display entirely—but it does mean you should shift budget toward Facebook until its incremental returns diminish. Incrementality testing reveals which channels deserve more investment and which have reached their efficient frontier.

For channels showing strong incrementality, the next question is: how much can you scale before returns diminish? Incrementality isn't constant. As you increase spend, you exhaust your most responsive audience segments and reach diminishing returns. Plan to retest incrementality periodically as you scale, especially after major budget increases.

Building Incrementality Into Your Attribution Strategy

Attribution and incrementality aren't competing approaches—they're complementary tools that work best together. Attribution provides the daily optimization signals you need to manage campaigns efficiently. Incrementality validates your strategic assumptions and calibrates your attribution models periodically.

Use incrementality testing results to improve your attribution model's accuracy. If incrementality testing reveals that your brand search campaigns show 70% lower incremental value than attributed value, you can adjust how your attribution model weights brand search touchpoints. This creates a hybrid approach: attribution informed by incrementality insights.

Some sophisticated marketers create "incrementality-adjusted attribution" models. They run incrementality tests on key channels quarterly, then apply adjustment factors to their daily attribution reporting. If Facebook shows 80% of its attributed value is truly incremental while retargeting shows only 30%, they multiply attributed conversions by these factors to estimate true incremental impact. Using a cross-platform marketing analytics dashboard makes managing these adjustments across channels much more practical.
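The adjustment-factor approach described above can be sketched in a few lines. The 80% and 30% factors come from the Facebook and retargeting example in the text; the function name and the attributed conversion counts are hypothetical.

```python
def incrementality_adjusted(attributed, factors):
    """Scale attributed conversions by each channel's tested incrementality factor.

    attributed: channel -> conversions credited by your attribution model
    factors:    channel -> share of attributed value found incremental in testing
    Channels without a test result are returned unchanged and listed separately,
    so untested numbers are not mistaken for validated ones.
    """
    adjusted, untested = {}, []
    for channel, conversions in attributed.items():
        if channel in factors:
            adjusted[channel] = conversions * factors[channel]
        else:
            adjusted[channel] = conversions
            untested.append(channel)
    return adjusted, untested


# Factors from the Facebook/retargeting example; attributed counts are hypothetical.
adjusted, untested = incrementality_adjusted(
    {"facebook": 400, "retargeting": 300, "email": 120},
    {"facebook": 0.80, "retargeting": 0.30},
)
print(adjusted, untested)
```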

Develop a testing cadence based on your budget and channel mix. Start by testing your highest-spend channels—these have the biggest impact on overall marketing efficiency. If you spend 40% of your budget on Facebook, understanding Facebook's incrementality should be your first priority. Test each major channel at least once, then retest annually or after significant strategy changes.

For channels with consistent performance, annual incrementality validation suffices. For channels where you're actively experimenting—testing new creative approaches, expanding to new audiences, or significantly scaling spend—test incrementality more frequently. Major changes in strategy can shift incrementality significantly.

Create a testing calendar that staggers incrementality tests across channels. Don't test everything simultaneously—you'll struggle to manage multiple experiments and might create interactions that confound results. Test one major channel per quarter, ensuring you have clean reads on each channel's true impact over time.

Document your incrementality findings in a centralized repository. Track not just the headline numbers but the context: what audience were you testing, what creative were you running, what was happening in your market at the time. These details help you interpret results and identify patterns across tests. Implementing best practices for using data in marketing decisions ensures your incrementality insights translate into better outcomes.

The most mature marketing organizations integrate incrementality into their regular planning cycles. When evaluating next quarter's budget allocation, they review recent incrementality test results alongside attribution data. Channels showing strong incrementality get increased investment. Channels showing weak incrementality face scrutiny—are there optimizations that could improve incrementality, or should budget shift elsewhere?

Putting It All Together

Incrementality testing transforms marketing from an exercise in correlation-spotting into genuine causal analysis. It answers the question every CFO asks: would these results have happened anyway? And it does so with scientific rigor, not assumptions or gut feeling.

The effort required is real. Designing proper tests, waiting for statistically significant results, and accepting that some of your "best performing" channels might not be as valuable as they appear—none of this is easy. But the alternative is worse: making million-dollar budget decisions based on attribution models that systematically overvalue lower-funnel tactics and undervalue upper-funnel brand building.

Start small. Choose one high-spend channel and run a simple holdout test. Calculate incremental ROAS alongside your standard ROAS. See the difference. That first test will change how you think about marketing measurement forever.

The foundation for effective incrementality testing is accurate, comprehensive tracking. You can't design meaningful tests if you don't know which users saw which ads and which users converted. You can't interpret results if your conversion tracking is incomplete or your attribution data is fragmented across platforms. Investing in performance marketing tracking software ensures you have the data quality needed for reliable tests.

This is where modern attribution platforms become essential. When you can track every touchpoint across every channel—from initial ad impression through CRM events to final purchase—you have the data infrastructure needed to design incrementality tests that actually answer your questions. You can create proper holdout groups, track conversions accurately across test and control, and analyze results with confidence.

Cometly's multi-touch attribution and analytics capabilities provide exactly this foundation. By capturing every touchpoint and connecting them to conversions in real time, Cometly gives you the complete customer journey data that makes incrementality testing actionable. You can see not just which channels show strong attribution, but which channels you should test for incrementality—and then act on those results with confidence.

Ready to elevate your marketing game with precision and confidence? Discover how Cometly's AI-driven recommendations can transform your ad strategy. Get your free demo today and start capturing every touchpoint to maximize your conversions.