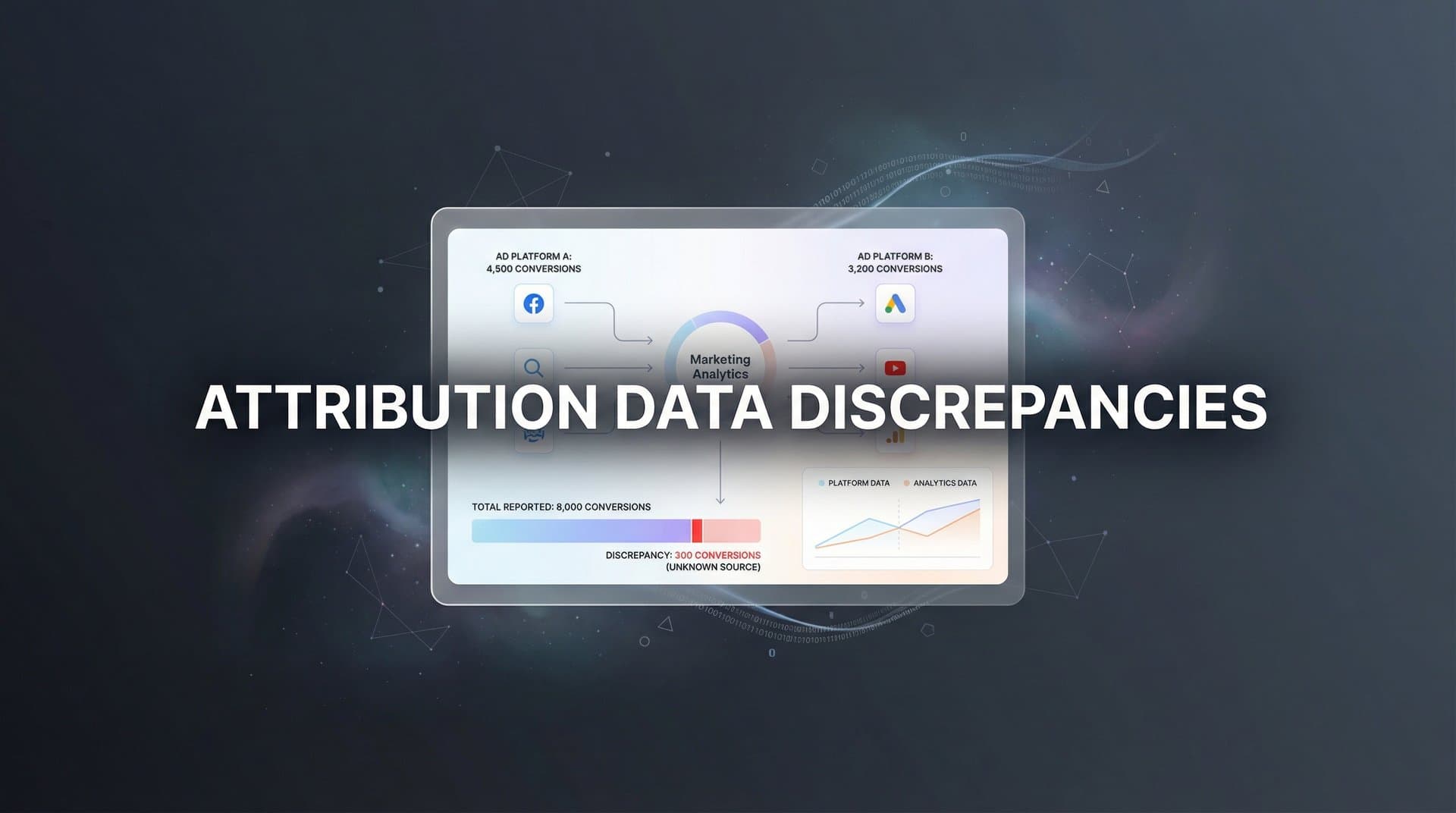

You open Facebook Ads Manager and see 247 conversions. Google Analytics shows 189. Your CRM reports 203. Same campaign. Same time period. Three completely different numbers.

If this sounds familiar, you're not alone. Attribution data discrepancies are one of the most frustrating realities of modern marketing—and they're not going away. The platforms you rely on to track performance are fundamentally designed to measure things differently, creating a maze of conflicting reports that can shake even the most confident marketer's trust in their data.

Here's the thing: these discrepancies aren't a bug in your tracking setup. They're a feature of how digital marketing measurement works in 2026. Different platforms use different attribution windows, tracking methods, and credit assignment logic. Each one is telling you a version of the truth—just not the whole truth.

This article will walk you through exactly why your marketing numbers don't match, what's really happening behind the scenes, and most importantly, how to build a measurement framework you can actually trust when making budget decisions.

The Anatomy of a Data Mismatch

Attribution data discrepancies occur when different platforms report conflicting conversion counts, revenue figures, or customer journey data for the same marketing activities. It's not that one platform is right and the others are wrong—they're each measuring reality through their own lens.

These discrepancies fall into three main categories. First, timing differences stem from attribution windows—the lookback period each platform uses to connect an ad interaction to a conversion. Facebook might credit conversions that happen within seven days of a click, while Google Ads extends that window to 30 days. The same customer action can fall inside one platform's window and outside another's, creating immediate variance.
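To see how a lookback window alone can split two platforms, here is a minimal sketch. The 7-day and 30-day values come from the example above; real windows depend on each account's settings, and the function and dictionary names are made up for illustration.

```python
from datetime import datetime, timedelta

# Illustrative click lookback windows taken from the example above.
# Real defaults vary by platform, account, and campaign settings.
CLICK_WINDOWS = {
    "facebook": timedelta(days=7),
    "google_ads": timedelta(days=30),
}


def claims_conversion(platform: str, click_time: datetime, conversion_time: datetime) -> bool:
    """Return True if the conversion falls inside the platform's click window."""
    elapsed = conversion_time - click_time
    return timedelta(0) <= elapsed <= CLICK_WINDOWS[platform]


click = datetime(2026, 3, 1, 12, 0)
purchase = datetime(2026, 3, 11, 12, 0)  # 10 days after the click

for platform in CLICK_WINDOWS:
    print(platform, claims_conversion(platform, click, purchase))
# facebook False
# google_ads True
```

The same purchase is counted by one platform and ignored by the other, even though nothing about the customer's behavior changed.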

Second, methodology differences reflect how platforms define a "valid" attribution event. Some platforms credit click-through conversions exclusively. Others include view-through conversions, giving credit when someone sees your ad but doesn't click, then converts later. A customer who saw your Facebook ad, clicked your Google ad, and then purchased creates a scenario where both platforms legitimately claim the conversion—based on their own tracking logic.

Third, tracking gaps represent lost data points that different tools handle differently. When a customer blocks cookies, switches devices mid-journey, or uses a browser that restricts tracking, each platform fills in those gaps using its own methodology—or doesn't fill them at all. Understanding why you're losing attribution data is the first step toward addressing these gaps.

Let's make this concrete. Imagine a customer sees your Facebook ad on Monday but doesn't click. Tuesday, they click a Google search ad and visit your site but don't buy. Wednesday, they receive your email and make a purchase. Facebook claims this conversion as a view-through attribution. Google claims it as a click-through conversion. Your email platform takes credit for the final touchpoint. Your CRM records one sale, but your marketing platforms report three conversions.

Each platform is technically correct within its own framework. The problem isn't the individual measurements—it's that adding them together creates a distorted picture of reality. This is the fundamental challenge of attribution in a multi-channel marketing world.
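The Monday-to-Wednesday journey above can be sketched in a few lines. The claim rules here are deliberately simplified and hypothetical; they are not any platform's actual logic, and the email touch is modeled as an open purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Touchpoint:
    day: str
    channel: str
    interaction: str  # "view", "click", or "open"


# One customer, one purchase, three touchpoints (from the example above).
journey = [
    Touchpoint("Monday", "facebook", "view"),
    Touchpoint("Tuesday", "google_ads", "click"),
    Touchpoint("Wednesday", "email", "open"),  # assumption: email touch logged as an open
]

# Hypothetical self-attribution rules: which interaction types each
# platform counts as grounds for claiming the conversion.
CLAIM_RULES = {
    "facebook": {"view", "click"},
    "google_ads": {"click"},
    "email": {"open", "click"},
}


def platform_claims(touchpoints: list[Touchpoint]) -> dict[str, int]:
    """Return 1 for each platform that would claim the single conversion."""
    claims = {}
    for platform, accepted in CLAIM_RULES.items():
        touched = any(
            t.channel == platform and t.interaction in accepted for t in touchpoints
        )
        claims[platform] = int(touched)
    return claims


claims = platform_claims(journey)
crm_sales = 1
reported_total = sum(claims.values())
print(f"CRM sales: {crm_sales}, sum of platform claims: {reported_total}")
```

Each claim is defensible on its own. The distortion only appears when you add them up.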

Why Ad Platforms and Analytics Tools Rarely Agree

Every ad platform operates with an inherent bias: it wants to prove its value. This isn't a conspiracy theory—it's basic business logic. Facebook, Google, TikTok, and every other advertising platform succeed when they can demonstrate that their ads drive results. This creates systematic over-counting when you sum conversion totals across channels.

Platform self-attribution works like this: each tool is designed to capture every possible conversion it might have influenced, then claim credit for it. The result is overlapping attribution where multiple platforms legitimately claim the same conversion based on their tracking rules. When you add up reported conversions across all your channels, the total often exceeds your actual conversions by significant margins—sometimes by 30% to 50% or more.

This gets amplified by technical tracking limitations that have intensified dramatically since Apple's iOS 14.5 update in 2021. App Tracking Transparency requires users to explicitly opt in to cross-app tracking, and many don't. When a customer browses Instagram on their iPhone, clicks your ad, then purchases on their laptop hours later, traditional tracking methods struggle to connect those dots. Privacy updates like this one continue to shrink the attribution data marketers can collect.

Cookie restrictions compound the problem. Third-party cookies, the backbone of cross-site tracking for years, are far less dependable than they once were. Safari and Firefox already block them by default. Chrome has repeatedly delayed and revised its own deprecation plans, but with a large share of traffic already cookie-restricted, the direction is clear. As these tracking mechanisms disappear, the blind spots in customer journey data expand.

Cross-device journeys create another layer of complexity. Modern customers don't follow linear paths. They research on mobile during their commute, compare options on their work computer, and purchase on their tablet at home. Implementing robust cross-device attribution tracking is essential for connecting these fragmented journeys.

Ad blockers throw another wrench into the system. Roughly 25% to 40% of internet users employ some form of ad blocking or tracking prevention. When these users interact with your marketing, many platforms simply don't see it. Some tools attempt to model this missing data, others ignore it entirely, and this inconsistency creates yet another source of discrepancy.

Then there's the attribution model variation problem. Last-click attribution gives 100% credit to the final touchpoint before conversion. First-click gives it all to the initial interaction. Linear attribution distributes credit evenly across all touchpoints. Data-driven attribution uses machine learning to assign credit based on each touchpoint's actual influence. Each approach produces different conversion counts and ROI calculations for the same campaigns.
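Here is a small sketch of the three rule-based models applied to one journey. The channel names are placeholders, and data-driven attribution is left out because it depends on a trained model rather than a fixed rule.

```python
def first_click(channels: list[str]) -> dict[str, float]:
    """All credit goes to the first touchpoint."""
    return {channels[0]: 1.0}


def last_click(channels: list[str]) -> dict[str, float]:
    """All credit goes to the final touchpoint before conversion."""
    return {channels[-1]: 1.0}


def linear(channels: list[str]) -> dict[str, float]:
    """Credit is split evenly across every touchpoint."""
    share = 1.0 / len(channels)
    credit: dict[str, float] = {}
    for channel in channels:
        credit[channel] = credit.get(channel, 0.0) + share
    return credit


journey = ["facebook", "google_ads", "email"]  # hypothetical ordered touchpoints

for model in (first_click, last_click, linear):
    print(model.__name__, model(journey))
```

Same journey, same single conversion, three different answers about which channel earned it.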

Most platforms use their own proprietary attribution models by default, and they rarely disclose the exact logic. Google Ads might use a data-driven model for one campaign type and last-click for another. Facebook has its own attribution methodology that factors in view-through conversions differently than Google Analytics. When you compare reports, you're not just comparing data—you're comparing fundamentally different philosophical approaches to credit assignment. Understanding the Google Analytics attribution limitations helps explain why these tools often disagree.

The Hidden Costs of Ignoring Discrepancies

When your marketing data doesn't align, the immediate reaction is often to shrug it off. "Close enough," you tell yourself, and move on. But attribution data discrepancies carry real costs that compound over time, even when they seem manageable in the moment.

Budget misallocation is the most direct consequence. When you can't trust your conversion data, you risk systematically over-investing in channels that appear to perform well in their own reporting but don't actually drive incremental revenue. If Facebook reports 50% more conversions than actually occurred, and you use that data to justify increasing your Facebook budget by 50%, you've just misallocated a significant portion of your marketing spend based on inflated numbers.
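A quick back-of-the-envelope sketch shows how a 50% over-count changes your cost per acquisition. The spend and conversion figures are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def cost_per_acquisition(spend: float, conversions: int) -> float:
    """Spend divided by the number of conversions."""
    return spend / conversions


spend = 10_000.0                        # hypothetical monthly spend
actual_conversions = 100                # what the CRM recorded
reported_conversions = int(actual_conversions * 1.5)  # platform over-counts by 50%

reported_cpa = cost_per_acquisition(spend, reported_conversions)
actual_cpa = cost_per_acquisition(spend, actual_conversions)

print(f"Reported CPA: ${reported_cpa:.2f}")
print(f"Actual CPA:   ${actual_cpa:.2f}")
```

In this sketch the channel looks a third cheaper than it really is, which is exactly the kind of gap that justifies a budget increase it hasn't earned.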

The inverse happens too. Channels that play important assisting roles in the customer journey often get under-credited in last-click models. Your display campaigns might be generating valuable awareness that leads to conversions attributed to search, but if you only look at last-click data, those display campaigns appear to underperform. You cut budget from a channel that's actually driving results—just not in a way that traditional attribution captures.

Decision paralysis becomes chronic when data discrepancies persist. Marketing teams waste hours every week trying to reconcile reports, building spreadsheets to explain variance, and debating which platform's numbers to believe. Those hours could be spent optimizing campaigns, testing new creative, or analyzing customer behavior. Instead, they're spent on forensic accounting that rarely produces satisfying answers.

Stakeholder trust erodes when numbers don't match. When you present campaign results to executives or clients and the conversion counts don't align with what they see in the CRM, credibility suffers. Even when you can explain the technical reasons for discrepancies, the optics are problematic. "Our marketing platforms say we drove 500 conversions, but our actual sales data shows 350" is not a confidence-inspiring statement.

Perhaps most insidious is the compounding optimization error problem. Modern ad platforms rely heavily on conversion data to train their algorithms. When you feed Facebook or Google inaccurate conversion signals, whether over-counted due to attribution overlap or under-counted due to tracking gaps, you degrade the quality of their targeting over time. The algorithms optimize toward the wrong patterns, creating a vicious cycle where your targeting gets progressively worse even as you increase spend.

This last point deserves emphasis. Ad platform AI is only as good as the data you feed it. When your conversion tracking is fragmented, delayed, or inconsistent, the machine learning models can't distinguish between high-value and low-value audiences effectively. You end up in a situation where increasing your budget doesn't scale results proportionally because the algorithm is optimizing toward a distorted version of reality.

Building a Single Source of Truth

The solution to attribution data discrepancies isn't choosing which platform to believe—it's building a unified measurement framework that captures the complete customer journey. This starts with addressing the technical foundation of how you track conversions in the first place.

Server-side tracking represents the most significant advancement in attribution accuracy in recent years. Traditional browser-based tracking relies on cookies and JavaScript tags that execute in the user's browser. These are vulnerable to ad blockers, cookie restrictions, and browser privacy features. A first-party, server-side tracking setup moves data collection to your own servers, where it is far less exposed to client-side blocking.

Here's how it works: instead of your website sending conversion data directly from the user's browser to Facebook or Google, it sends that data to your server first. Your server then forwards the information to ad platforms using their server-side APIs. This approach recovers conversion data that would otherwise be lost to tracking prevention tools, typically improving data capture rates significantly.
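As a rough sketch of the server's half of that flow, the snippet below builds the event your server might forward. The field names are hypothetical and do not match any specific platform's schema; each ad platform documents its own payload format, endpoint, and authentication. Hashing identifiers such as email with SHA-256 before sending is a common requirement, but check the platform's documentation for the exact normalization rules.

```python
import hashlib
import json
import time


def hash_identifier(value: str) -> str:
    """Normalize (trim, lowercase) and SHA-256 hash a personal identifier."""
    normalized = value.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_server_event(order_id, email, value, currency, event_time=None):
    """Build a generic purchase event (hypothetical field names)."""
    return {
        "event_name": "purchase",
        # Assumption: a stable ID lets the receiver drop duplicates if the
        # same purchase also arrives from a browser tag.
        "event_id": order_id,
        "event_time": int(event_time if event_time is not None else time.time()),
        "user": {"hashed_email": hash_identifier(email)},
        "value": value,
        "currency": currency,
    }


event = build_server_event(
    "order-1001", "  Jane@Example.com ", 59.0, "USD", event_time=1_700_000_000
)
print(json.dumps(event, indent=2))
# A real integration would now send this payload to the ad platform's
# server-side API from your backend; that call is intentionally omitted.
```

Because the event is assembled on your server from order data, it doesn't depend on a browser tag firing successfully.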

But server-side tracking alone isn't enough. You need to connect the full customer journey across all touchpoints—ad clicks, website visits, email opens, CRM interactions, and actual purchases. This requires integrating data from your ad platforms, website analytics, marketing automation tools, and sales systems into a unified view.

Most companies approach this integration challenge in one of two ways. The first is building a custom attribution data warehouse that pulls information from all sources using APIs, then running attribution logic on top of that consolidated data. This gives you maximum control but requires significant engineering resources to build and maintain.

The second approach is using a dedicated attribution platform designed specifically to unify marketing data. These tools connect to your ad platforms, analytics, and CRM, then apply attribution models across the complete customer journey. The advantage is speed of implementation and pre-built integrations. The tradeoff is less customization than a fully custom solution.

Regardless of which approach you choose, the goal is the same: create a system where every touchpoint is captured and connected to actual revenue outcomes. When a customer clicks your Facebook ad, visits your site, receives an email, and then purchases, you want one unified record of that journey—not four disconnected data points across four different platforms.
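One way to picture that unified record is a simple stitch of events from each system by a shared customer identifier. This is only a sketch: the event shape, source names, and `customer_id` key are assumptions, and real identity resolution across devices is considerably harder than a dictionary lookup.

```python
from collections import defaultdict

# Hypothetical events exported from four separate systems.
events = [
    {"customer_id": "c-1", "source": "facebook_ads", "type": "ad_click", "ts": "2026-03-02T09:15:00"},
    {"customer_id": "c-1", "source": "crm", "type": "purchase", "ts": "2026-03-04T18:40:00", "revenue": 120.0},
    {"customer_id": "c-1", "source": "web_analytics", "type": "site_visit", "ts": "2026-03-02T09:16:00"},
    {"customer_id": "c-1", "source": "email", "type": "email_received", "ts": "2026-03-04T08:00:00"},
]


def build_journeys(raw_events):
    """Group events by customer and order them into one journey record each."""
    grouped = defaultdict(list)
    for event in raw_events:
        grouped[event["customer_id"]].append(event)

    journeys = {}
    for customer_id, customer_events in grouped.items():
        # ISO-8601 timestamps in a uniform format sort chronologically as strings.
        ordered = sorted(customer_events, key=lambda e: e["ts"])
        journeys[customer_id] = {
            "touchpoints": [e["type"] for e in ordered if e["type"] != "purchase"],
            "revenue": sum(e.get("revenue", 0.0) for e in ordered),
        }
    return journeys


journeys = build_journeys(events)
print(journeys["c-1"])
```

The output is one record with three ordered touchpoints and one revenue figure, instead of four rows in four tools.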

Multi-touch attribution models become powerful once you have this unified data. Instead of relying on any single platform's self-reported conversions, you can apply attribution logic that distributes credit across all touchpoints based on their actual contribution. A time-decay model might give more weight to recent interactions. A position-based model might emphasize first and last touch. A data-driven model might use machine learning to determine influence.
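For a sense of how time-decay and position-based models differ, here is a minimal sketch. The 7-day half-life and the 40/20/40 split are common illustrative choices, not fixed standards, and the channel names are placeholders.

```python
def time_decay(touchpoints, half_life_days=7.0):
    """touchpoints: list of (channel, days_before_conversion) pairs.

    A touch loses half its weight for every `half_life_days` of age.
    """
    weights = [(ch, 0.5 ** (days / half_life_days)) for ch, days in touchpoints]
    total = sum(w for _, w in weights)
    credit = {}
    for ch, w in weights:
        credit[ch] = credit.get(ch, 0.0) + w / total
    return credit


def position_based(channels, endpoint_share=0.4):
    """First and last touch each get `endpoint_share`; the rest is split evenly."""
    n = len(channels)
    if n == 1:
        return {channels[0]: 1.0}
    if n == 2:
        weights = [0.5, 0.5]
    else:
        middle = (1.0 - 2 * endpoint_share) / (n - 2)
        weights = [endpoint_share] + [middle] * (n - 2) + [endpoint_share]
    credit = {}
    for ch, w in zip(channels, weights):
        credit[ch] = credit.get(ch, 0.0) + w
    return credit


decay = time_decay([("facebook", 2), ("google_ads", 1), ("email", 0)])
position = position_based(["facebook", "google_ads", "email"])
print({ch: round(v, 3) for ch, v in decay.items()})
print({ch: round(v, 3) for ch, v in position.items()})
```

Both functions hand out exactly one conversion's worth of credit, which is what keeps the channel totals from exceeding your real sales.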

The specific model matters less than the underlying principle: you're making attribution decisions based on complete journey data, not fragmented platform reports. This eliminates the overlapping attribution problem where multiple platforms claim the same conversion, and it surfaces the true incremental impact of each marketing channel.

When you feed this accurate, unified conversion data back to ad platforms through Conversion API integrations, you close the optimization loop. The platforms receive complete, accurate signals about what's actually driving conversions, enabling their algorithms to target and optimize effectively. This is where attribution accuracy translates directly into performance improvement.

Practical Steps to Reconcile Your Data Today

Building a perfect attribution system takes time, but you can start improving data consistency immediately with some practical reconciliation strategies. These won't eliminate discrepancies entirely, but they'll make your data more actionable while you work toward a more comprehensive solution.

Start by auditing your attribution windows across all platforms. Document the default lookback periods each tool uses—Facebook's 7-day click and 1-day view, Google Ads' 30-day click, Google Analytics' default session-based attribution. Where possible, standardize these windows to reduce variance. Many platforms allow you to customize attribution windows in their reporting interfaces. Setting consistent lookback periods won't eliminate discrepancies, but it removes one major source of variance.

For windows you can't standardize, create documentation that explains the differences. When you're comparing Facebook's 7-day attributed conversions to Google's 30-day numbers, you should expect Google to report more conversions from the same traffic. Understanding this systematically helps you interpret variance rather than being confused by it. For a deeper dive into these issues, explore our guide on common attribution challenges in marketing analytics.

Next, establish a primary conversion source as your source of truth for revenue data. For most businesses, this should be your CRM or e-commerce platform—the system that records actual transactions and revenue. Use this as your baseline for validation. When ad platforms report conversion totals, compare them to your CRM data to understand over-reporting patterns.

This doesn't mean platform data is useless. Ad platform conversion counts are valuable for optimization and relative performance comparison. But when you're making budget allocation decisions or reporting to stakeholders, anchor to your CRM numbers. Say "We generated 350 sales" (from CRM) rather than "Facebook drove 247 conversions and Google drove 189" (which adds up to more than your actual sales).

Create a discrepancy tolerance threshold to avoid analysis paralysis. Perfect alignment between platforms isn't realistic and shouldn't be your goal. Instead, define an acceptable variance range based on your business context. Many marketing teams use a 10-15% tolerance threshold. If platform-reported conversions are within 15% of CRM data, they accept the variance and move on. Only when discrepancies exceed this threshold do they investigate deeper.

This threshold approach keeps you focused on meaningful discrepancies rather than chasing down every minor variance. A 5% difference between Google Analytics and your CRM might simply reflect attribution window differences or a handful of test transactions. A 40% difference signals a real tracking problem that needs investigation. Knowing how to diagnose and fix attribution discrepancies becomes critical once variance exceeds your acceptable threshold.
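The threshold check is simple enough to automate. The sketch below uses the conversion counts from the opening example (247, 189, and 203) and a 15% tolerance; the function names are made up for illustration, and the right threshold depends on your business.

```python
def variance_vs_crm(platform_conversions: int, crm_conversions: int) -> float:
    """Signed variance of a platform's count relative to the CRM baseline."""
    if crm_conversions <= 0:
        raise ValueError("CRM conversions must be positive to compute variance")
    return (platform_conversions - crm_conversions) / crm_conversions


def needs_investigation(platform_conversions: int, crm_conversions: int,
                        tolerance: float = 0.15) -> bool:
    """True when the absolute variance exceeds the tolerance threshold."""
    return abs(variance_vs_crm(platform_conversions, crm_conversions)) > tolerance


crm = 203
reports = {"facebook_ads": 247, "google_analytics": 189}

for platform, count in reports.items():
    variance = variance_vs_crm(count, crm)
    flag = "investigate" if needs_investigation(count, crm) else "within tolerance"
    print(f"{platform}: {variance:+.1%} ({flag})")
```

With these numbers, the Facebook figure lands above the 15% line and the Google Analytics figure falls inside it, so only one of the two gaps would earn a closer look.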

Implement regular data quality checks as part of your reporting routine. Set up a weekly or monthly reconciliation process where you compare platform conversion totals to CRM data, document variance, and flag anomalies. This creates a paper trail that helps you spot trends—like gradual tracking degradation—before they become serious problems.
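A reconciliation log can also flag gradual tracking degradation on its own. In this sketch, the weekly figures are invented, and "degrading" is defined (as an assumption) as the platform-to-CRM capture rate falling for three consecutive weeks.

```python
# Hypothetical weekly log: (week, platform-reported conversions, CRM conversions)
weekly_log = [
    ("2026-W01", 190, 200),
    ("2026-W02", 182, 200),
    ("2026-W03", 171, 198),
    ("2026-W04", 160, 201),
]


def capture_rates(rows):
    """Share of CRM conversions that the platform also reported, per week."""
    return [(week, platform / crm) for week, platform, crm in rows]


def is_degrading(rates, min_weeks=3):
    """True if the capture rate fell in each of the last `min_weeks` comparisons."""
    values = [rate for _, rate in rates]
    if len(values) < min_weeks + 1:
        return False
    recent = values[-(min_weeks + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


rates = capture_rates(weekly_log)
for week, rate in rates:
    print(f"{week}: {rate:.1%} of CRM conversions captured")
print("Degrading:", is_degrading(rates))
```

A steady slide like this one is easy to miss week to week but obvious once the rates sit side by side.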

Finally, be transparent about discrepancies in your reporting. When presenting campaign results, acknowledge that platform numbers don't perfectly align with actual conversions, explain why, and anchor recommendations to your source of truth data. This transparency builds stakeholder trust and sets realistic expectations about attribution accuracy.

Moving Forward With Confidence

Attribution data discrepancies are a reality of modern marketing, not a reflection of your team's competence or your tracking setup's quality. Every marketer deals with conflicting conversion counts, and the challenge has intensified as privacy regulations and tracking restrictions have expanded. The platforms you rely on are designed to measure things differently, and perfect alignment simply isn't possible with fragmented tracking infrastructure.

The key insight is this: the solution isn't choosing which platform to believe. It's building a unified tracking system that captures the complete customer journey and serves as your single source of truth. When you connect ad platforms, website analytics, and CRM data into one coherent view, you eliminate the overlapping attribution problem and gain visibility into what's actually driving results.

Server-side tracking forms the technical foundation by recovering conversion data that browser-based tracking misses. Multi-touch attribution models provide the analytical framework for distributing credit fairly across touchpoints. Together, they enable you to make confident budget decisions based on complete data rather than fragmented platform reports.

This matters more than ever in 2026. As tracking restrictions continue to expand and customer journeys become increasingly complex, the gap between platform-reported performance and actual results will only widen for marketers relying on traditional tracking methods. The teams that invest in unified attribution infrastructure now will have a decisive advantage in optimization effectiveness and budget efficiency.

Accurate attribution data doesn't just help you understand past performance—it enables confident scaling decisions. When you know which channels and campaigns genuinely drive incremental revenue, you can increase spend strategically rather than guessing based on inflated platform metrics. You can feed better conversion data back to ad platform algorithms, improving their targeting and optimization over time. And you can report results to stakeholders with confidence, knowing your numbers reflect reality.
